If you’ve ever built or evaluated multi-agent LLM systems, you’ve hit the same bottleneck:

agents collaborate by dumping text back and forth.

This works, but comes with structural problems:

- The context window grows with every reasoning step

- Latency increases as agents serialize → tokenize → parse text

- Reasoning traces become bloated and expensive

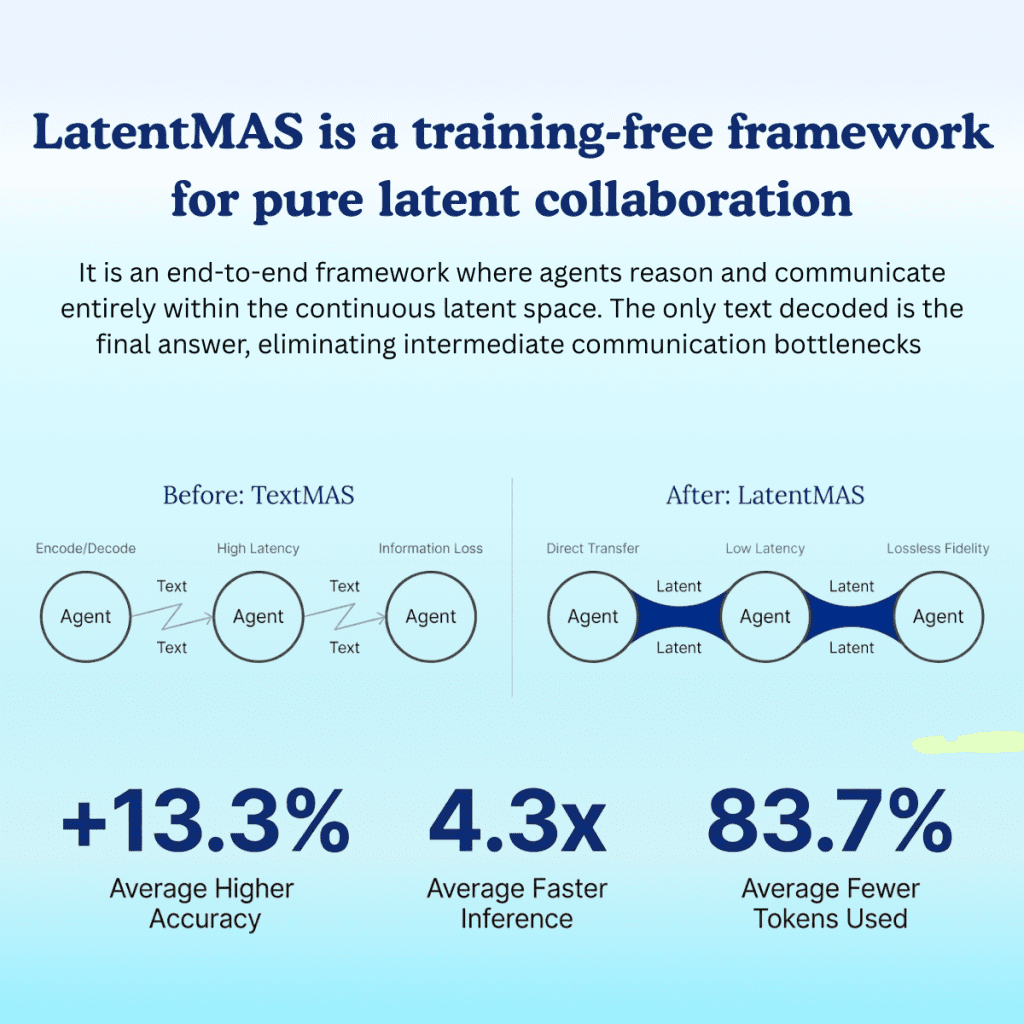

LatentMAS proposes a fundamentally different inter-agent communication model:

skip the token channel completely and operate directly in latent space.

Below, we break down its architecture, performance characteristics, practical constraints, and implications for real-world LLM systems.

1. Token-Level communication to latent-state exchange

Classic MAS architecture looks like this:

Agent A → generate_tokens() → Agent B → encode() → reasoningLatentMAS replaces that chain:

Agent A → hidden_state → Agent B → hidden_state reasoningInstead of generating tokens, each LLM runs forward propagation up to a selected layer and passes:

- final hidden layer embeddings

- key/value attention cache

- positional embeddings

- residual state

These vectors are then injected directly into the next agent’s forward pass.

In other words:

- Agents don’t talk in language

- They share internal representations

This transforms inter-agent collaboration into a latent-level protocol, not a text-level protocol.

Architectural consequence

Latent exchange functions like an internal memory bus:

| Traditional MAS | LatentMAS |

|---|---|

| expensive external API | zero-token internal handoff |

| serializes reasoning into text | keeps reasoning in vector space |

| parsing/formatting overhead | direct state transfer |

This changes the basic economics of MAS: the bottleneck is no longer tokens.

2. Performance profile

LatentMAS is validated across:

- math + logic tasks (GSM8K, AQuA)

- reasoning (HellaSwag, BBH)

- code generation (HumanEval+)

And the gains are not marginal:

| Metric | LatentMAS vs Text-MAS |

|---|---|

| Token usage | 50–80% reduction |

| End-to-end latency | 3–7× faster |

| Accuracy | consistently improved |

It’s not a “speed-up hack.”

It changes the computing cost model itself:

- Reduced decoding

- Reduced context expansion

- Reduced prompt formatting

The cost scales with latent forward passes, not token throughput.

3. Zero-training implementation (The most unusual part)

Most latent-communication proposals require model fine-tuning or special pre-training.

LatentMAS doesn’t.

It works on standard HF models:

- Qwen, Qwen-3, Mistral, etc.

- No checkpoint modifications

- No alignment training

The key building blocks are:

- Access to hidden states (HF supports this by default)

- Transfer between agents

- Re-inject into the next agent as embeddings

- Only decode at the end

This makes it deployable in existing stacks.

What this means for engineers?

- No retraining.

- No special hardware.

- No custom models.

This makes the idea immediately actionable, not just research-grade.

4. Hybrid inference pipeline

The authors don’t just propose a theory — they modify real inference engines.

The recommended architecture:

HF backend → latent rollouts

vLLM backend → final decodingWhy?

- HF supports embedding-level prompt injection

- vLLM is significantly faster for token decoding

But vLLM does not support latent prompting natively.

So they partially patch the backend to:

- bypass tokenizer I/O

- accept latent cache state

- enable KV-cache transfer

This is a strong practical signal:

LatentMAS was designed for deployment, not just publication.

5. Implementation

You can think of the computation as two loops:

Latent rollout loop

state = agent.forward(input_ids)

for step in range(n):

state = agent.latent_step(state)

broadcast(state)Optional verbalization

final_answer = agent.decode(state)

return final_answerThis architecture removes three expensive cycles:

- tokenization

- parsing

- re-encoding

The pipeline becomes:

latent → latent → latent → decode onceInstead of:

tokenize → decode → tokenize → decode → ...6. Limitations and tradeoffs

This isn’t magic. Engineers should consider:

Debuggability

Latent traces are opaque.

You can’t simply log:

Agent says: “I think step 3 is wrong”You need latent-logging or vector-space visualization.

Model heterogeneity

Works best when:

- Same model family

- Same hidden-state format

- Same positional embedding scheme

Cross-model communication is currently non-trivial.

Engine dependencies

Modified vLLM backend → extra maintenance cost.

For reproducibility and benchmarking:

use HF backend first.

LatentMAS changes the unit of communication:

- not text,

- not tokens,

- but latent semantics.

This unlocks a different future design space:

- many-agent collaboration without context explosion

- hierarchical agent architectures

- multi-step plans without token overhead

- vector-level planning loops

For the first time in MAS research, token cost is not the limiting factor.

7. How to get started with LatentMAS

If you want to experiment:

Github link to LatentMAS: https://github.com/Gen-Verse/LatentMAS

- Use an HF model that exposes hidden states

- Pass embeddings between agents directly

- Only decode once

- If deploying at scale, consider hybrid HF+vLLM

That’s it.

Conclusion

LatentMAS suggests a paradigm where multi-agent collaboration happens in the same representational space the model uses to think, not the space humans use to communicate. We may be witnessing the first major step toward MAS architectures that are compute-efficient, reasoning-dense, and no longer bound by token channels.