Before 1979, every business depended on a back office most people never saw.

Rows of accounting clerks, heads down, pencils moving across 13-column ledger paper. Work moved like an assembly line — one person responsible for one column’s worth of calculations, then passing it to the next. Change a single cost assumption, revise one revenue figure, and the entire sheet had to be redone. From the beginning. By hand.

What we’d now call a simple “what-if” calculation could take a full team of clerks twenty hours to complete.

(Image: a paper spreadsheet from the 1970s.)

VisiCalc, the first spreadsheet program for personal computers, launched in October 1979. It is widely regarded as the first “killer app” in history. So revolutionary that people bought the $2,000 Apple II (the only computer it ran on at launch) just to run the $100 application.

Almost overnight, the disruption followed. Work that once required teams and weeks of recalculation could now be done by a single person in minutes.

The United States lost roughly 400,000 accounting clerk jobs between 1980 and 2000. But over the same period, the number of accountants grew by roughly 600,000.

One accountant, after seeing an early VisiCalc demonstration, reportedly began shaking and said:

“I spent all week doing that.”

That sudden realization, that an entire category of labor has just become obsolete, is what engineering teams are experiencing today.

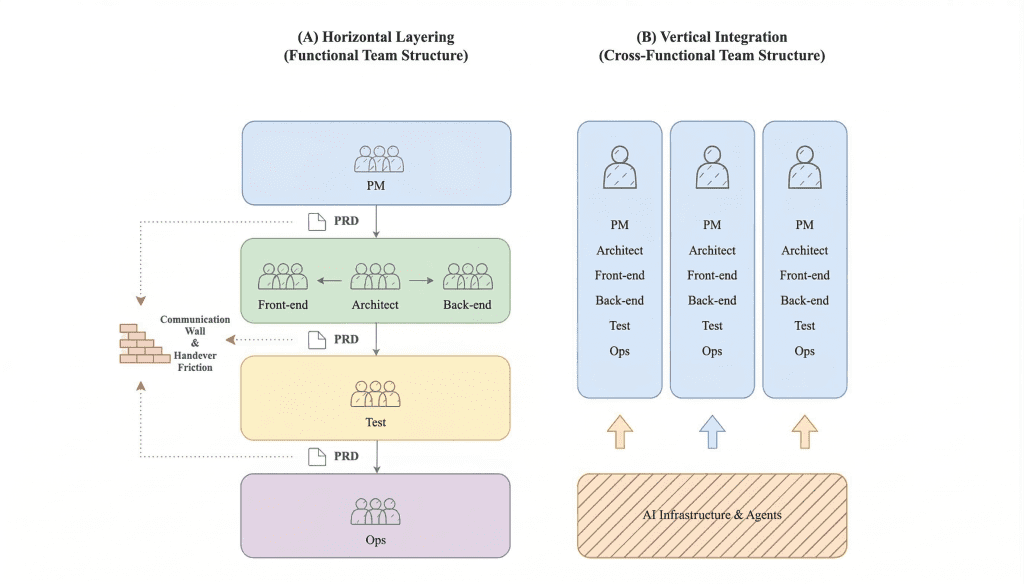

Team topologies: Vertical slicing vs. Horizontal silos

For two decades, software teams have operated a lot like those old accounting offices.

Work moves through the organization in stages. Product managers write requirements and pass them to developers. Developers write code and pass it to QA. QA tests and sends issues back for fixes. Every handover between layers creates what we now call a Communication Wall: a point where context gets lost, friction accumulates, and velocity drops.

Comparison of organizational structures — Traditional Horizontal Layering vs. AI-Driven Vertical Integration enabling end-to-end ownership

In 2026, the leading engineering organizations have moved to Vertical Integration. Instead of silos, we have small, cross-functional pods where a single unit owns a feature end-to-end. This shift is only viable at scale because of AI coding agents. Tools like Claude Code and Cursor act as connective tissue between the frontend, backend, and infrastructure layers. Rather than assigning a “Frontend Task” or a “Backend Task,” teams now assign a “Vertical Feature” — and one senior lead can oversee a pod that delivers a complete, working product slice in a single cycle.

Recent research on distributed engineering productivity consistently shows that removing handover friction produces a significant reduction in wasted engineering time — with teams redirecting that recovered capacity toward product logic rather than coordination overhead.

The economics of AI: Why headcount math has changed

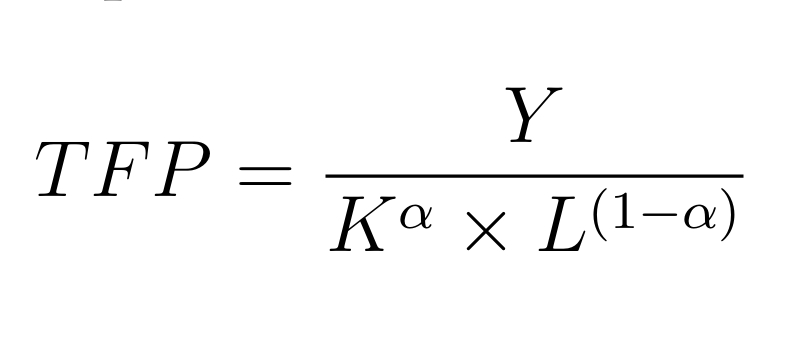

Total Factor Productivity (TFP) measures how efficiently a set of inputs produces output. In software terms: how much product does your team ship per unit of effort?

TFP formula: Y is Total Output, K is Capital, L is Labor (Scale), and α is Capital's share of output
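
The formula itself lives in the image and is not reproduced in the text. Assuming it is the standard Cobb-Douglas production function, which matches the symbols in the caption, it can be written as below. The symbol A for total factor productivity is our addition; the caption does not name it.

```latex
Y = A \, K^{\alpha} L^{1-\alpha}
\qquad\Longrightarrow\qquad
A = \frac{Y}{K^{\alpha} L^{1-\alpha}}
```

Read this way, TFP is the share of output that growth in capital and labor alone does not explain.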

In the pre-AI era, the answer to ‘ship more’ was almost always ‘hire more.’ Output scaled linearly with headcount. AI fundamentally alters this economic logic:

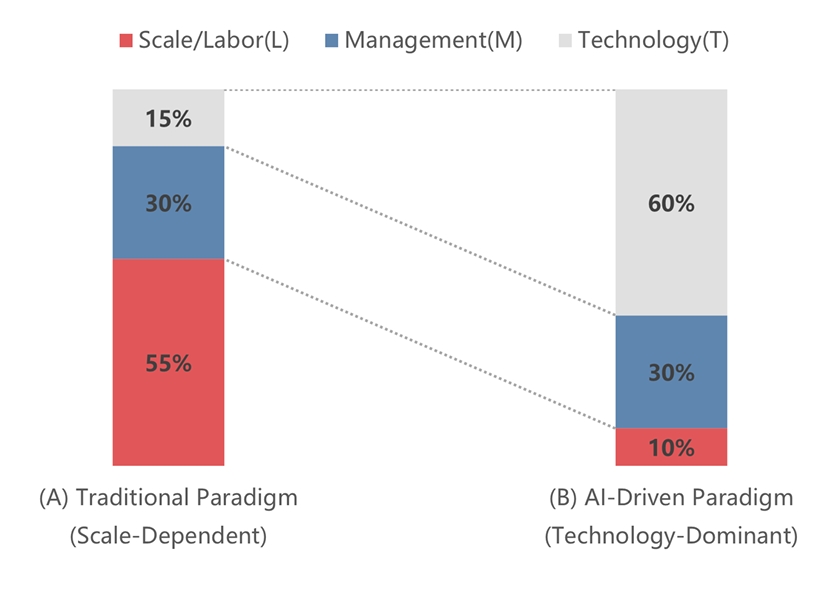

- Output multiplier. AI acts as a direct multiplier on output — boosting Y without a proportional increase in L. The same team ships significantly more.

- Coordination quality over quantity. The nature of management has shifted. AI reduces logistical scheduling complexity, but it increases the stakes of architectural decisions. Managerial focus moves from running standups to making high-leverage calls about system design, long-term scalability, and technical liability.

- Diminishing returns on headcount. Adding more people to an AI-native team can now actively hurt productivity. When agents handle the connective work, additional humans reintroduce the very coordination costs that AI eliminated. The optimal team structure is smaller and more deliberate — what leading organizations now call an Atomic Team.
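
One common way to reason about the coordination cost in the last point is to count pairwise communication channels, which grow as n(n-1)/2. This is an illustrative model we are assuming here, not one the article's figures are based on, and the function name is hypothetical.

```python
def pairwise_channels(team_size: int) -> int:
    """Number of distinct person-to-person communication paths in a team."""
    if team_size < 0:
        raise ValueError("team_size must be non-negative")
    # Each pair of people is one potential channel: n choose 2.
    return team_size * (team_size - 1) // 2


for size in (3, 5, 10, 20):
    print(f"{size:>2} people -> {pairwise_channels(size):>3} channels")
```

Under this simple model, doubling a team from 5 to 10 people raises the channel count from 10 to 45, which is why a small pod can stay cheap to coordinate while a large one cannot.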

Changing weights of the TFP components in the AI era

The question is no longer “how many engineers do we need?”

It’s “how do we structure a small, high-trust pod with the right agent infrastructure to move at machine speed?”

Build-Test-Iterate at speed: The 36-hour feature cycle

The traditional software development lifecycle was sequential. Write code. Wait for QA. Fix bugs. Wait for review. Ship — maybe — two weeks later.

AI-enabled software development has collapsed this into a continuous loop.

With multi-agent workflows, the Code → Test → Iterate cycle now runs in minutes, not days. Agents build a feature, run unit tests, self-correct on failures, and generate documentation in parallel — all without a dedicated QA phase. Continuous inference has replaced the checkpoint model.
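
The shape of that loop can be sketched in a few lines. This is a minimal illustration, not a real agent framework: `generate` and `run_tests` are hypothetical stand-ins for an agent call and a test runner, and the toy versions below exist only to make the sketch runnable.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class LoopResult:
    code: str
    attempts: int
    passed: bool


def build_test_iterate(
    generate: Callable[[str, List[str]], str],
    run_tests: Callable[[str], List[str]],
    spec: str,
    max_attempts: int = 5,
) -> LoopResult:
    """Regenerate code until the tests pass or the attempt budget is spent.

    `run_tests` returns a list of failure messages; an empty list means success.
    """
    failures: List[str] = []
    code = ""
    for attempt in range(1, max_attempts + 1):
        code = generate(spec, failures)  # the agent sees the previous failures
        failures = run_tests(code)
        if not failures:
            return LoopResult(code, attempt, True)
    return LoopResult(code, max_attempts, False)


def fake_generate(spec: str, failures: List[str]) -> str:
    # Toy "agent": only fixes the bug after it has seen a failure report.
    return "return a + b" if failures else "return a - b"


def fake_run_tests(code: str) -> List[str]:
    # Toy test runner: passes only when the code adds its inputs.
    return [] if "a + b" in code else ["add(2, 3) expected 5"]


result = build_test_iterate(fake_generate, fake_run_tests, "add two numbers")
print(result)
```

The attempt budget matters: without it, an agent that cannot satisfy the tests would loop forever instead of handing the problem back to a human.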

The 36-Hour Feature Cycle

What used to be a two-week sprint now fits inside a 36-hour micro-cycle:

- Day 1: Human defines architectural intent

- Day 2: Agentic execution with Human-in-the-Loop (HITL) refinement

The speed is real. But it creates a new pressure point: if an agent completes a feature in two hours and then waits two days for a human pull request review, the bottleneck hasn’t disappeared; it has just been relocated.

Optimizing for review latency is now as important as optimizing for build speed.
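
The arithmetic behind this is simple enough to check directly. The sketch below uses the example figures above (a two-hour build and a two-day review wait); the function name and the "halve it" scenarios are illustrative assumptions.

```python
def cycle_breakdown(build_hours: float, review_wait_hours: float) -> dict:
    """Split a feature's elapsed time into build time and review wait."""
    if build_hours < 0 or review_wait_hours < 0:
        raise ValueError("durations must be non-negative")
    total = build_hours + review_wait_hours
    if total == 0:
        raise ValueError("total cycle time must be positive")
    return {
        "total_hours": total,
        "review_share": review_wait_hours / total,
    }


baseline = cycle_breakdown(2, 48)        # agent builds in 2 h, review waits 2 days
faster_build = cycle_breakdown(1, 48)    # halve the build time
faster_review = cycle_breakdown(2, 24)   # halve the review wait

for label, result in [
    ("baseline", baseline),
    ("build twice as fast", faster_build),
    ("review twice as fast", faster_review),
]:
    print(
        f"{label:<22}{result['total_hours']:>6.1f} h total, "
        f"{result['review_share']:.0%} spent waiting on review"
    )
```

Halving the build time saves one hour out of fifty. Halving the review wait saves twenty-four.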

The new rules of Product Management in AI-enabled software development

Agentic development doesn’t make the PM role smaller — it makes it harder in different ways. When the team can execute at machine speed, the bottleneck becomes the quality of decisions, not the pace of execution.

- Rule 1: Optimize for review latency

In the horizontal model, the bottleneck was throughput: getting code written fast enough. In the vertical model, the bottleneck is review latency. How quickly can a human evaluate what the agent has built, identify architectural concerns, and make a go/no-go call? That is what you optimize for.

- Rule 2: Architecture is the code

The PM’s job has shifted to editing architecture. You’re checking whether the agent’s implementation violates systemic integrity, creates technical debt, or conflicts with decisions made six months ago that the agent doesn’t know about. That requires deep product and system context. It cannot be delegated.

At this speed, context drift becomes the dominant failure mode.
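
One way to picture a guard against context drift is a decision log that agent proposals are checked against before review. The sketch below is deliberately naive and every name and log entry in it is hypothetical. It uses plain substring matching, where a real setup would likely need richer retrieval.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Decision:
    topic: str
    rejected: str  # the option that was considered and turned down
    reason: str


def find_conflicts(proposal: str, log: List[Decision]) -> List[Decision]:
    """Return past decisions whose rejected option reappears in the proposal."""
    text = proposal.lower()
    return [d for d in log if d.rejected.lower() in text]


# Hypothetical log entries, for illustration only.
decision_log = [
    Decision("session storage", "Redis", "extra service to operate"),
    Decision("API style", "GraphQL", "team standardized on REST"),
]

proposal = "Cache user sessions in Redis to cut login latency."
for conflict in find_conflicts(proposal, decision_log):
    print(f"Revisits rejected option '{conflict.rejected}': {conflict.reason}")
```

Even a check this crude turns "the agent doesn't know about that decision" from a surprise found in review into a flag raised before review starts.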

Context management: 3-Layer memory stack

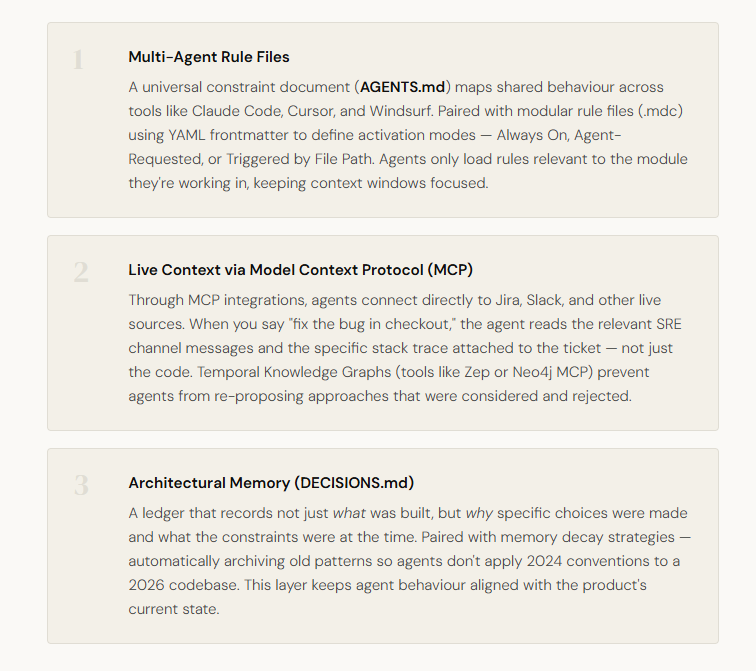

The teams shipping most reliably in 2026 have moved beyond a single source of truth. They operate a structured, three-layer memory hierarchy that keeps agents aligned with architectural reality.

The horizontal model served software teams well for twenty years because human communication overhead was the binding constraint. AI has removed that constraint — and in doing so, has made the old structure not just inefficient, but counterproductive.

The teams that adapt fastest will be the ones with the clearest architecture, the tightest pods, and the most deliberate context infrastructure.

And just like accounting departments in 1980, the teams that adapt early will look strange at first.

A few years later, they will simply look obvious.

What is the impact of AI on software engineering teams in 2026?

AI is acting as a direct productivity multiplier on engineering output, enabling smaller, vertically integrated pods to ship complete product features in 36-hour micro-cycles — work that previously required full sprints and multiple siloed teams.

What is a vertical integration model for software teams?

A vertical integration model assigns a small, cross-functional pod end-to-end ownership of a feature — from frontend to backend to infrastructure — replacing the traditional horizontal handover model (PM → Dev → QA) that creates communication walls and velocity loss.

How do AI coding agents like Claude Code change team structure?

AI coding agents like Claude Code and Cursor act as connective tissue across layers of the stack, enabling one senior lead to oversee a complete feature pod. They reduce coordination overhead, run continuous build-test-iterate loops, and allow teams to operate at machine speed.

What is context drift in AI-native development?

Context drift occurs when AI agents operate without awareness of past architectural decisions, leading them to propose solutions that were previously considered and rejected. It is the dominant failure mode in agentic engineering workflows and is mitigated through structured memory hierarchies.

About InteligenAI

Most companies know AI can transform how they operate. Few have the in-house expertise to build it the right way.

InteligenAI bridges that gap. We are a custom AI development company and AI product studio that partners with businesses to design, build, and launch AI solutions that actually work in production.

From defining the right use case to deploying and scaling the final product, we are your trusted technical partner at every step. The result: enterprise-grade AI systems that are secure, reliable, and built to grow with your business.